Contents

The world before Gen AI video: what was already working

How the technology actually works and what it costs

The prompt is the product, and it tells you everything

The regional split nobody is talking about

What high-intent users actually do differently

What was intended and where it went wrong at scale

Who survives and the one problem nobody has solved

What this means for consumers and builders

Sora Is Just the Beginning. Why Most Gen AI Video Models Will Wind Down

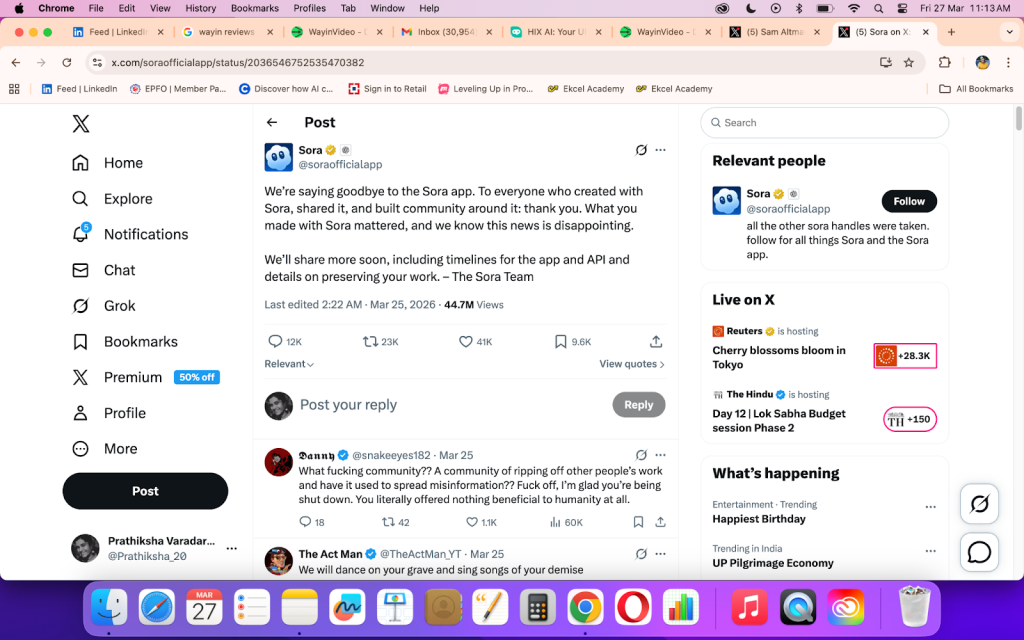

On March 24, 2026, OpenAI quietly shut down Sora, the AI video generator it had launched to global fanfare barely a year earlier. The official reason was a shift in focus to "world simulation research" to support robotics. The real reason was a little different: Sora was expensive to run, hard to monetise, and losing ground to products with far better unit economics.

Most coverage of this moment will frame it as a surprising reversal. I don't think it's surprising at all. In fact, I think Sora is the first of many.

I am not writing this as a neutral observer. I was part of building an AI video product. I was responsible for launching it, and I watched what happened. What I learnt from real users, real prompts, regional data, and real revenue is that the structural problems that killed Sora are not unique to OpenAI. They are present in almost every general-purpose AI video platform operating today.

This is what we saw.

The world before Gen AI video: what was already working

To understand where Gen AI video went wrong, we need to understand what it was trying to replace.

A Hollywood feature film, a national TV commercial, a corporate training video, a social media reel, and a news broadcast are not the same product at different price points. They are fundamentally different things, produced by different people, for different reasons, under different quality standards.

A corporate training video values clarity, multilingual accessibility, and the ability to update when a product changes. A social media clip values immediate visual impact, format compatibility, and the ability to produce at volume. A feature film values performance, narrative continuity, and emotional truth that audiences feel rather than analyse.

Each segment had its own definition of quality. A training video that would embarrass a film director was perfectly adequate for its purpose. A social clip that would get a brand manager fired was exactly what a creator needed.

Gen AI video arrived claiming it could serve all of these at once. That was the first mistake.

How the technology actually works and what it costs

The mechanism behind Gen AI video is elegant in theory. The model starts with pure random noise (remember a television screen with no signal?) and is trained to gradually subtract that noise, guided by a text description, until coherent frames emerge. Do this across many frames simultaneously, with the model learning how objects, light, and motion behave over time, and you get video.

What this description obscures is the cost.

- Generating a minute of AI video at frontier quality consumes roughly 2 to 10 watt-hours of energy.

- It requires a high-end GPU running for several minutes and costs the provider between $0.50 and $2.00 in raw compute.

- On top of that come infrastructure, staffing, and the cost of training the model itself, which runs into tens of millions of dollars per major version.

Most consumer-facing AI video platforms launched at price points well below this cost. Some offered free tiers with generous credit allowances. The logic was the same one that drove early cloud computing and early streaming: lose money now, acquire users, optimise costs later, and convert free users to paid at scale.

This logic requires conversion. And conversion requires that the free user eventually derives enough value to pay. What the platforms discovered, and what we discovered at Vmaker AI, is that the majority of free users had no intention of ever paying, because they had no downstream use for the video they were generating.
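To make the conversion requirement concrete, here is a minimal sketch of the break-even arithmetic. Only the $0.50 to $2.00 per-minute compute range comes from the figures above. The free allowance, the subscription price, and the paid usage are hypothetical inputs chosen for illustration, and the model deliberately ignores infrastructure, staffing, and training costs.

```python
def break_even_conversion_rate(
    free_minutes_per_user: float,
    compute_cost_per_minute: float,
    monthly_price: float,
    paid_minutes_per_user: float,
) -> float:
    """Share of users who must pay for subscriptions to cover raw compute.

    Solves p * price = p * paid_cost + (1 - p) * free_cost for p.
    """
    free_cost = free_minutes_per_user * compute_cost_per_minute
    paid_cost = paid_minutes_per_user * compute_cost_per_minute
    margin_per_payer = monthly_price - paid_cost
    if margin_per_payer <= 0:
        raise ValueError("a paying user costs more than they pay; no break-even exists")
    return free_cost / (margin_per_payer + free_cost)


# Hypothetical plan: 2 free minutes per user, a $30 subscription, 10 paid minutes used.
for cost in (0.50, 2.00):  # compute cost range quoted in the article
    rate = break_even_conversion_rate(2, cost, 30, 10)
    print(f"compute ${cost:.2f}/min -> break-even paying share {rate:.1%}")
```

Under these assumed inputs, the required paying share moves from roughly 4% at the low end of the compute range to roughly 29% at the high end. The exact numbers matter less than the shape: every free minute raises the bar that conversion has to clear.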

The prompt is the product, and it tells you everything

Here is something no analyst can tell you from the outside, because it requires access to actual prompt data: the difference between a user who will pay and a user who will not is visible in the first thing they type.

At Vmaker AI, we observed a stark and consistent pattern across our user base. Paying users (the ones who converted, exported, and came back) wrote prompts that looked nothing like what you would expect from an "AI video" product. The prompts were cleanly operational.

Paying users wrote prompts like briefs: "Create an informational video on AI in the workplace for LinkedIn." Free users wrote prompts like dreams: "A cat flying through Mars with a spaceship in the background."

The paying user already knew what the video was for. They had a platform in mind. They had an audience in mind. They had use cases like informational content on trending topics, product explainers, knowledge videos for social channels. Their prompt was a clean work instruction and not a creative experiment.

The free user was exploring the boundary of what the technology could imagine. That is a legitimate and interesting thing to do. It is not, however, a behaviour that generates revenue. The exploration prompt produces an output that has nowhere to go. No platform, no audience, no downstream purpose.
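As a rough illustration of how that difference could be detected, the sketch below checks whether a prompt names a publishing platform and a content type. This is a toy heuristic, not the method we used: the keyword lists and function names are hypothetical, and a real system would need far more than keyword matching.

```python
import re

# Hypothetical keyword lists, for illustration only.
PLATFORMS = {"linkedin", "youtube", "instagram", "tiktok", "facebook"}
CONTENT_TYPES = {"informational", "explainer", "tutorial", "training", "product"}


def intent_signals(prompt: str) -> dict:
    """Report which brief-like signals appear in a prompt."""
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    return {
        "names_platform": bool(words & PLATFORMS),
        "names_content_type": bool(words & CONTENT_TYPES),
    }


def looks_like_brief(prompt: str) -> bool:
    """A prompt reads like a brief if it names both a destination and a format."""
    signals = intent_signals(prompt)
    return signals["names_platform"] and signals["names_content_type"]


brief = "Create an informational video on AI in the workplace for LinkedIn."
dream = "A cat flying through Mars with a spaceship in the background."
print(looks_like_brief(brief))  # True
print(looks_like_brief(dream))  # False
```

The two example prompts are the ones quoted above. The point of the sketch is only that a destination and a purpose are visible in the text of the prompt itself.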

What made this finding actionable was the next observation: the users who published their video also upgraded. The correlation was strong enough to become a product principle for us. The act of publishing the AI output and putting it in front of a real audience was the clearest signal of intent we had. Users who stopped at generation never converted. Users who got to publication almost always did.

Publish → Upgrade: the strongest conversion signal we found. Users who published their video converted to paid at dramatically higher rates than those who generated but did not publish.
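The publish-versus-upgrade comparison is simple to compute from per-user records. A minimal sketch, assuming each record carries two boolean fields, `published` and `upgraded` (hypothetical names, not Vmaker AI's actual schema). The sample records are made up to show the shape of the calculation and are not real data.

```python
def upgrade_rate_by_publish(users):
    """Return {published?: share of those users who upgraded}."""
    counts = {}  # published flag -> [upgraded count, total count]
    for user in users:
        bucket = counts.setdefault(bool(user["published"]), [0, 0])
        bucket[0] += int(bool(user["upgraded"]))
        bucket[1] += 1
    return {flag: upgraded / total for flag, (upgraded, total) in counts.items()}


# Made-up sample: 4 users published (3 upgraded), 6 stopped at generation.
sample = (
    [{"published": True, "upgraded": True}] * 3
    + [{"published": True, "upgraded": False}]
    + [{"published": False, "upgraded": False}] * 6
)
rates = upgrade_rate_by_publish(sample)
print(f"published: {rates[True]:.0%}, generated only: {rates[False]:.0%}")
```

Splitting the funnel this way separates users who reached an audience from users who only generated, which is the comparison that mattered for us.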

This has a direct implication for how AI video platforms should be designed. The goal should not be to maximise generation volume. It should be to minimise the distance between generation and publication. Every feature, every UX decision, every prompt template should be oriented toward getting the user to a publishable output as fast as possible. That is where economic value lives.

The regional split nobody is talking about

The second pattern we observed was geographic, and it is one we have not seen discussed anywhere in the public conversation about AI video adoption.

Usage and value did not correlate by region. They inverted.

Users from APAC-dominated markets generated high volumes of content. But their prompt behaviour reflected the same exploratory, non-specific pattern untethered to a publishing destination. The result was high usage, high compute cost, and low download rates. These users were engaging with the product not as a tool but as a novelty.

Users from North America, the EU, and Australia showed the opposite pattern. Sharper prompts. Specific use cases. Clear platform intent. And they downloaded and published at dramatically higher rates.

65% of all downloaded videos on Vmaker AI came from North American and European users, despite those regions representing a smaller share of total generations.

This matters beyond the economics of our specific product. It suggests that the creator economy's maturity in a region directly affects whether AI video tools generate real output or just generate activity. In markets where creators already have an established relationship with platforms, audiences, and content formats, AI video slips naturally into an existing workflow. In markets where that infrastructure is still developing, AI video becomes entertainment: interesting to try, but not useful enough to publish.

The implication for platforms burning compute on global free tiers is significant. Not all usage is equal. A generation from a user with a publishing destination is worth multiples of a generation from a user who is curious about what the technology can do. Treating them identically in your cost model is how you arrive at Sora's economics.
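One way to stop treating all usage identically is to track compute cost per downloaded video rather than cost per generation. A minimal sketch follows. The segment names, download rates, and the $1.00 cost per generation are hypothetical values chosen for illustration, not figures from our data.

```python
def compute_cost_per_download(
    generations: int, download_rate: float, cost_per_generation: float
) -> float:
    """Compute spend divided by the number of videos actually downloaded."""
    downloads = generations * download_rate
    if downloads == 0:
        return float("inf")  # all cost, no usable output
    return generations * cost_per_generation / downloads


# Hypothetical segments: same volume and unit cost, different download rates.
segments = {"exploratory": 0.05, "publishing-intent": 0.40}
for name, rate in segments.items():
    cost = compute_cost_per_download(1000, rate, 1.00)
    print(f"{name}: ${cost:.2f} of compute per downloaded video")
```

With these assumed rates, the exploratory segment costs about eight times as much compute per downloaded video as the publishing-intent segment, even though each individual generation costs the same.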

What high-intent users actually do differently

Beyond the prompt and the geography, there was a third dimension that separated our high-value users from the rest: what they did after the first generation.

Low-intent users treated AI output as a finished product. They generated, evaluated, and either kept it or discarded it. The interaction was transactional and shallow.

High-intent users treated AI output as a starting point. They customised. They added branding. They layered in additional footage alongside the AI-generated content rather than relying on it exclusively. They were comfortable with, and often enthusiastic about, connecting their social channels for direct publishing from within the platform.

The highest-value users did not blindly use the AI output. They collaborated with it. The AI gave them a foundation; they made it theirs.

This behavioural signature has a name in product development: it is the difference between a user and a creator. Users consume outputs. Creators transform them. The creator pattern of customising, branding, augmenting, and publishing is what separates a platform with sustainable retention from one with a leaky free tier.

It also explains why constraining the use case improved our outcomes. When we narrowed to vertical social video, we attracted users who already had a social media workflow. Those users already knew how to take a piece of content and make it their own. The format constraint filtered for the creator behaviour pattern almost automatically.

What was intended and where it went wrong at scale

The platforms that launched general-purpose AI video (Sora included) were not naïve about their audience. They intended to serve creative professionals: marketers, filmmakers, content studios, agencies with defined outputs and the skills to prompt effectively.

What they failed to anticipate was what happens when you make a powerful, low-friction tool free and point it at the internet. The intended audience showed up. So did everyone else.

The majority of early AI video usage had no commercial application. Our support queues filled with requests for outputs that had no economic equivalent. They were scenes from private imagination, concepts that existed only as creative experiments, video equivalents of dreams. Content trended toward NSFW categories in volumes that created moderation costs on top of compute costs.

It is like helping someone bring their dream to life. But a dream does not necessarily have any economic value.

This was a failure of product-market fit at the category level. The platforms built tools that were most compelling to the users least likely to pay, and least compelling to the users most likely to pay.

At Vmaker AI, less than 20% of our open beta users found the output genuinely useful for their actual needs. The rest were exploring, experimenting, and in many cases exploiting the free compute allocation with content that had nowhere to go.

Sora's $155 million EBITDA loss in 2024, on roughly $44 million in recognised revenue, is the arithmetic of this dynamic at OpenAI scale. It is not a management failure. It is the predictable outcome of giving away a computationally expensive product to an audience that cannot convert.
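The ratio implied by those two figures can be checked directly. The inputs below are the loss and revenue numbers as quoted in this article. The function name is just for illustration.

```python
def loss_per_revenue_dollar(ebitda_loss: float, revenue: float) -> float:
    """Dollars lost for every dollar of revenue earned."""
    return ebitda_loss / revenue


# Figures as quoted in this article, in millions of US dollars.
ratio = loss_per_revenue_dollar(155, 44)
print(f"${ratio:.2f} lost per $1 of revenue")  # $3.52 lost per $1 of revenue
```

That works out to roughly three and a half dollars lost for every dollar earned.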

Why Sora will not be the last

The conditions that led to Sora's shutdown are structural to the general-purpose AI video category, not specific to OpenAI.

Any model offering open-ended video generation at low cost will attract the same user distribution: a large majority with no commercial intent, and a small minority with genuine need. The majority drives cost. The minority drives revenue.

The platforms most at risk share a recognisable profile: large rounds raised on impressive demo reels, high monthly active user counts, high compute infrastructure, and monthly paying user rates below 5%. The gap between demo virality and subscription economics is where this category will continue to break.

OpenAI's decision to shut down Sora is not a failure of technology. The company is heading toward an IPO and cannot justify a product losing more than three dollars for every dollar it earns, especially when those compute resources could support ChatGPT, Codex, and enterprise API products with far better margins. The same calculation is coming for others. The only variable is timing.

Who survives and the one problem nobody has solved

The companies building durable businesses in AI video share a single characteristic: they constrained the use case before the market forced them to.

Synthesia sells AI avatar video for corporate training and communications. It does not try to generate beach sunsets or car chases. It generates a person speaking to camera, in 140 languages, in your brand style, updateable in minutes. The use case is defined, the quality bar is predictable, and the willingness to pay is established.

Runway has survived by building a workflow platform for creative professionals: people who already know what they want, have the skills to prompt effectively, and have downstream commercial use for the output. Its camera controls, inpainting, and video extension tools are not features for the general consumer. They are features for the working filmmaker who needs to stay inside one platform.

The pattern is consistent: the survivors are not the ones with the most impressive general-purpose demos. They are the ones who answered the question nobody else wanted to ask. Who specifically is paying for this, for what output, and why is that output worth more to them than the cost of producing it?

We should be honest about what even the survivors have not solved. At Vmaker AI, our most satisfied users still tell us the same thing: they have a specific style in mind that the model cannot quite reach. Vertical optimisation solved the format problem. It did not solve the individual aesthetic problem. Every creator has a visual identity, a pace, a colour palette, a cut style that lives in their head and cannot yet be transmitted through a prompt.

The gap between "a video that meets the format" and "a video that feels like mine" is the next frontier. Some of it will be closed by better personalisation: learning from a user's publishing history, their brand assets, and their previous outputs. Some of it will require editing tools that let the creator close the last mile themselves. Some of it will simply remain unsolved for longer than the industry's optimism suggests.

What this means for consumers and builders

The consolidation beginning now will not be bad for consumers. It will be clarifying.

The era of "AI video can do anything" will give way to an era of AI video tools that do specific things exceptionally well. Corporate video teams will have purpose-built tools integrated into their workflow. Social media creators will have tools designed for their formats and publishing cadence. Filmmakers will have tools that augment pre-production and post-production without pretending to replace the camera.

The general consumer who signed up for Sora hoping for a magic video wand will be disappointed. But the marketing manager who needs 50 variants of a product video, the L&D team that needs a training module updated overnight, the creator who needs a week's worth of vertical content by Tuesday morning — these users will be better served by what comes next.

For builders, the lesson is not that AI video is a bad business. It is that AI video as a commodity served to everyone, priced near zero, with no defined use case is a bad business. The same technology applied to a specific problem, for a specific user who derives measurable value, is a very good business.

The signal is in the prompt. The signal is in the geography. The signal is in whether the user publishes. Every platform in this category has access to these signals. The ones that act on them will still be here in two years. The ones that keep chasing demo virality will follow Sora.

About Vmaker AI

Vmaker AI is a video creation platform built for social media creators and marketing teams. After learning from an open-ended AI video beta, Vmaker AI pivoted to focus specifically on vertical short-form AI avatar video — a decision driven by real user signal, real prompt data, and the conviction that Gen AI video only works when it is built for a specific use case, not every use case.